Prerequisites

- A virtual machine with Hadoop and MySQL already installed

- The Hive archive (apache-hive-2.3.3-bin.tar.gz)

Upload the archive and extract it

tar -xvf apache-hive-2.3.3-bin.tar.gz

Configure the Hive environment variables

export HIVE_HOME=/opt/software/hive/apache-hive-2.3.3-bin

export PATH=$PATH:$HIVE_HOME/bin

Edit the Hive configuration file (conf/hive-site.xml)

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://192.168.200.161:3306/hive</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>cqrjxk39</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

</configuration>
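A malformed hive-site.xml (for example, a broken XML declaration) is an easy mistake to make at this step. The following is a minimal sketch, not part of Hive itself, that parses the file with the JDK's built-in XML parser and lists the properties it finds. The class name `HiveSiteCheck` and the method `readProperties` are hypothetical names chosen for this illustration.

```java
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper: checks that a Hadoop-style configuration file is
// well-formed XML and collects its <property> entries.
class HiveSiteCheck {

    // Returns name -> value pairs in file order; throws IllegalStateException
    // if the input cannot be parsed or a property lacks <name> or <value>.
    static Map<String, String> readProperties(InputStream in) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(in);
            NodeList props = doc.getElementsByTagName("property");
            Map<String, String> result = new LinkedHashMap<>();
            for (int i = 0; i < props.getLength(); i++) {
                Element p = (Element) props.item(i);
                String name = p.getElementsByTagName("name").item(0).getTextContent().trim();
                String value = p.getElementsByTagName("value").item(0).getTextContent().trim();
                result.put(name, value);
            }
            return result;
        } catch (Exception e) {
            throw new IllegalStateException("not a readable configuration file", e);
        }
    }

    public static void main(String[] args) throws Exception {
        if (args.length != 1) {
            System.err.println("usage: java HiveSiteCheck <path to hive-site.xml>");
            return;
        }
        try (InputStream in = Files.newInputStream(Paths.get(args[0]))) {
            readProperties(in).forEach((name, value) -> {
                // Do not echo the metastore password to the terminal.
                String shown = name.endsWith("Password") ? "******" : value;
                System.out.println(name + " = " + shown);
            });
        }
    }
}
```

Running it against the file above should list the five properties; a parse error points to a syntax problem in the file.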

Create a database named hive in MySQL

Copy the MySQL JDBC driver jar into /opt/software/hive/apache-hive-2.3.3-bin/lib

Initialize the metastore schema

schematool -initSchema -dbType mysql

Start Hive

Create a table

create table students(id int,sname string,age int) row format delimited fields terminated by ',';

Load data into the table

load data local inpath '/students.txt' into table students;
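The load statement assumes a local text file whose lines match the table definition: three comma-separated fields in the order id, sname, age. As a rough sketch of that layout, the following program writes such a file. The class name `StudentsFile` and the sample rows are made up for illustration; they are not taken from the original data.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper that produces input for the students table.
class StudentsFile {

    // One record in the layout the table expects: id,sname,age
    static String formatRow(int id, String sname, int age) {
        return id + "," + sname + "," + age;
    }

    // Writes the rows to the target path, one record per line, as UTF-8.
    static void write(Path target, List<String> rows) {
        try {
            Files.write(target, rows, StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Made-up sample rows, for illustration only.
        List<String> rows = Arrays.asList(
                formatRow(1, "张三", 20),
                formatRow(2, "李四", 21),
                formatRow(3, "王五", 22));
        // Output path is the first argument, or students.txt in the current directory.
        Path target = Paths.get(args.length > 0 ? args[0] : "students.txt");
        write(target, rows);
    }
}
```

The path passed to `load data local inpath` must point to wherever this file ends up on the machine running the Hive client.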

Create a temporary function

Add the hive-exec dependency to the Maven project:

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-exec</artifactId>

<version>2.3.3</version>

</dependency>

package com.hive;

import java.util.HashMap;

import java.util.Map;

import org.apache.hadoop.hive.ql.exec.UDF;

public class MyUDF extends UDF {

private static Map<String,String> map = new HashMap<String, String>();

static {

map.put("张三","zhangsan");

map.put("李四","lisi");

map.put("王五","wangwu");

map.put("赵六","zhaoliu");

}

public String evaluate(String name){

if (map.get(name) != null){

return map.get(name);

}else {

return "admin";

}

}

}
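The lookup logic can be tried out before packaging, without a Hive installation. The sketch below uses a hypothetical class `NameLookup` that mirrors the mapping in `MyUDF` but does not extend `UDF`, so it compiles with only the JDK. It is a stand-in for local testing, not a replacement for the UDF class.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for MyUDF: same mapping, no Hive dependency.
class NameLookup {

    private static final Map<String, String> NAMES = new HashMap<>();

    static {
        NAMES.put("张三", "zhangsan");
        NAMES.put("李四", "lisi");
        NAMES.put("王五", "wangwu");
        NAMES.put("赵六", "zhaoliu");
    }

    // Returns the romanized name, or "admin" when the name is unknown or null.
    static String evaluate(String name) {
        return NAMES.getOrDefault(name, "admin");
    }

    public static void main(String[] args) {
        System.out.println(evaluate("张三"));   // prints zhangsan
        System.out.println(evaluate("nobody")); // prints admin
    }
}
```

`getOrDefault` collapses the null check and the second `get` call of the original into a single lookup with the same result.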

Package the project as a jar and upload it to the Linux server

Add the jar to the Hive session

add jar /hive2-1.0-SNAPSHOT.jar;

Create the temporary function

create temporary function u as 'com.hive.MyUDF';

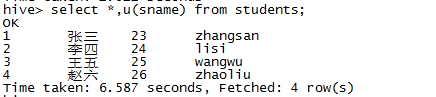

Query: