|

576]

    elif groups == 2:
        out_channels = [-1, 24, 200, 400, 800]
    elif groups == 3:
        out_channels = [-1, 24, 240, 480, 960]
    elif groups == 4:
        out_channels = [-1, 24, 272, 544, 1088]
    elif groups == 8:
        out_channels = [-1, 24, 384, 768, 1536]

    # The first unit of each stage changes the channel count and merges the
    # shortcut by concatenation.
    data = shuffleUnit(data, out_channels[stage - 1], out_channels[stage],
                       'concat', groups, grouped_conv)

    # The remaining units keep the channel count and use an additive shortcut.
    for i in range(stage_repeats[stage - 2]):
        data = shuffleUnit(data, out_channels[stage], out_channels[stage],
                           'add', groups, True)

    return data

def get_shufflenet(num_classes=10):
    data = mx.sym.var('data')
    # Stem: 3x3 stride-2 convolution followed by 3x3 stride-2 max pooling.
    data = mx.sym.Convolution(data=data, num_filter=24,
                              kernel=(3, 3), stride=(2, 2), pad=(1, 1))
    data = mx.sym.Pooling(data=data, kernel=(3, 3), pool_type='max',
                          stride=(2, 2), pad=(1, 1))
    # Stages 2 to 4, each built from ShuffleNet units.
    data = make_stage(data, 2)
    data = make_stage(data, 3)
    data = make_stage(data, 4)
    # Classifier head: global average pooling, then a fully connected layer.
    data = mx.sym.Pooling(data=data, kernel=(1, 1), global_pool=True, pool_type='avg')
    data = mx.sym.flatten(data=data)
    data = mx.sym.FullyConnected(data=data, num_hidden=num_classes)
    out = mx.sym.SoftmaxOutput(data=data, name='softmax')
    return out

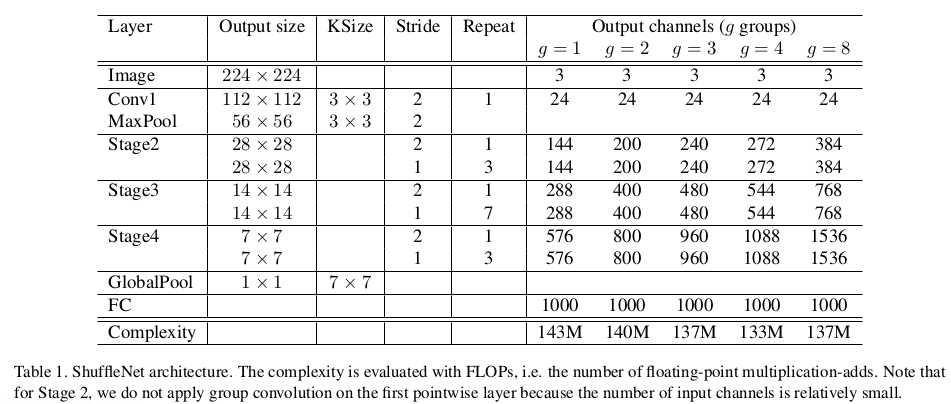

These two functions directly reproduce the architecture table given in the paper:

Figure 7
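As a rough cross-check of that table, the sketch below tabulates the output size and channel count of each layer group without needing MXNet. It mirrors the channel lists and repeat counts used in `make_stage`, but the names `shufflenet_table` and `halve` are hypothetical helpers introduced here. It assumes a 224x224 input, and it assumes that the first unit of every stage downsamples with a 3x3, stride-2, pad-1 operation, as the stem layers do.

```python
# Pure-Python sketch: no MXNet required.
# Channel lists copied from make_stage (conv1, stage2, stage3, stage4).
OUT_CHANNELS = {
    2: [24, 200, 400, 800],
    3: [24, 240, 480, 960],
    4: [24, 272, 544, 1088],
    8: [24, 384, 768, 1536],
}
# Number of stride-1 'add' units that follow the first unit of each stage.
STAGE_REPEATS = [3, 7, 3]


def halve(size):
    """Output size of a 3x3, stride-2, pad-1 convolution or pooling layer."""
    return (size + 2 * 1 - 3) // 2 + 1


def shufflenet_table(groups=3, input_size=224):
    """Return rows of (layer, output_size, channels, number_of_units)."""
    channels = OUT_CHANNELS[groups]
    rows = []
    size = halve(input_size)
    rows.append(("conv1", size, channels[0], 1))
    size = halve(size)
    rows.append(("maxpool", size, channels[0], 1))
    for idx, repeats in enumerate(STAGE_REPEATS):
        # Assumption: the first ('concat') unit of each stage has stride 2.
        size = halve(size)
        rows.append(("stage%d" % (idx + 2), size, channels[idx + 1], 1 + repeats))
    rows.append(("global_pool", 1, channels[-1], 1))
    return rows


if __name__ == "__main__":
    for name, size, ch, units in shufflenet_table(groups=3):
        print("%-12s %3dx%-3d %5d channels  x%d" % (name, size, size, ch, units))
```

Calling `shufflenet_table(groups=8)` gives the same spatial sizes with the wider channel counts, which makes it easy to compare the columns of the table side by side.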

Comparison of Results

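The later sections of the paper use a range of experiments to show that both techniques are effective and that ShuffleNet performs well. I won't go into the details here; the tables at the end of the paper make the results clear at a glance.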

|